I was walking Luna — my miniature pinscher, a tiny creature with the energy of a nuclear reactor — along the Rhône when it hit me. As usual, the best ideas don't come at a desk. They come when you're watching a 4kg dog try to fight a swan.

I'd been running a penetration test on my site (it-digest.info) the day before, and the analytics reports looked wrong. Too many hits. Internal traffic from my own testing IPs was polluting the data — both in the internal analytics module and, presumably, the external one. Self-referrals from the site's own domain were cluttering the referrer reports. The numbers I was looking at weren't real.

The fix was clear in my head: IP exclusion lists, self-referral filtering, a purge for the existing garbage data. I knew exactly what I wanted. I could not wait. And neither could Luna — she was already jumping at my phone, trying to catch whatever was glowing on the screen.

So I opened Claude Code in the browser, right there on the riverbank, and started a session.

// The Problem

After a pen test, your analytics are trash. Every probe, every automated scan, every manual check — they all register as page views. My internal analytics module was faithfully recording every single hit from my testing IPs. The referrer reports were full of self-referrals — www.it-digest.info showing up as a "traffic source" for it-digest.info. Useless noise.

The external analytics (GA4, Matomo) had the same problem, but those platforms have their own IP filtering settings. The internal analytics, though — that's my code, my module, my problem.

What I needed:

- IP exclusion list supporting both individual IPs and CIDR ranges — pen testing comes from known addresses

- Self-referral filtering: the site shouldn't count itself as a referrer, whether it's it-digest.info, www.it-digest.info, or IT-DIGEST.INFO

- A purge action to clean existing polluted data

- No changes to external analytics — those scripts must stay active and visible during pen testing

// The Session: Planning Phase

I described the problem to Claude Code and let it explore the codebase. It ran two agents in parallel to map the analytics system — internal module structure, external module hooks, middleware chain, theme template context.

Then it produced a plan. And the plan was wrong.

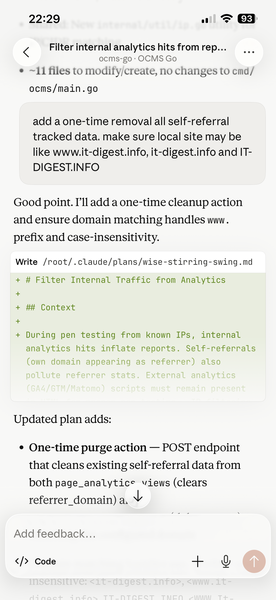

The first draft proposed filtering based on logged-in user sessions. That's not how pen testing works. You test from known IPs, not logged-in accounts. I pushed back:

"Wrong! No logged-in user exclusions! Pen testing is done from known IPs, so IP exclusions may be useful. Self-referral exclusions are useful as well."

Claude revised. The new plan had three features: IP-based exclusion for internal analytics, self-referral filtering by site domain, and a sync.Map/nonce trick for external analytics to conditionally suppress script rendering.

I pushed back again:

"How will external analytics IP exclusion work? If IP is in the excluded list, will analytics code be present and active? This is needed for proper pen testing."

Good point — you can't hide the GA4 script during a pen test and then claim the test was thorough. Claude dropped the external analytics code changes entirely and replaced them with an admin UI hint pointing users to configure IP filters in GA4/Matomo directly.

Then I added more requirements:

"Add a one-time removal of all self-referral tracked data. Make sure local site may be like www.it-digest.info, it-digest.info and IT-DIGEST.INFO."

And:

"Local domain is in .env and shell vars, yes?"

Claude found that site_url already existed in the config table — no need for a separate setting. It would extract the host, normalize www. prefix and case, and use that for matching. One less field to manage.
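
Here is a minimal sketch of that normalization, not the code that ended up in oCMS. It assumes site_url holds a full URL with a scheme, and the helper names (normalizeHost, hostFromURL, isSelfReferral) are hypothetical, chosen only for illustration.

```go
package main

import (
	"fmt"
	"net/url"
	"strings"
)

// normalizeHost lowercases a hostname, drops a trailing dot, and strips a
// leading "www." so that all spellings of the site compare equal.
// Hypothetical helper, not taken from the oCMS codebase.
func normalizeHost(host string) string {
	host = strings.ToLower(strings.TrimSpace(host))
	host = strings.TrimSuffix(host, ".")
	return strings.TrimPrefix(host, "www.")
}

// hostFromURL extracts the normalized hostname (without port) from a URL.
// It returns "" when the URL cannot be parsed or carries no host, which is
// also what happens for a bare "it-digest.info" without a scheme.
func hostFromURL(raw string) string {
	u, err := url.Parse(strings.TrimSpace(raw))
	if err != nil {
		return ""
	}
	return normalizeHost(u.Hostname())
}

// isSelfReferral reports whether referrer points at the same site as siteURL.
// An empty or unparseable value on either side never counts as a match.
func isSelfReferral(siteURL, referrer string) bool {
	site := hostFromURL(siteURL)
	return site != "" && site == hostFromURL(referrer)
}

func main() {
	site := "https://it-digest.info" // assumed shape of the site_url config value
	referrers := []string{
		"https://www.it-digest.info/posts/1",
		"https://IT-DIGEST.INFO/",
		"https://example.com/link",
		"",
	}
	for _, ref := range referrers {
		fmt.Printf("%q self-referral: %v\n", ref, isSelfReferral(site, ref))
	}
}
```

Comparing normalized hosts rather than raw strings is what lets one config value cover all three spellings. Subdomains other than www. are deliberately treated as different sites in this sketch.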

Finally:

"Add to plan: add/update tests, docs and translations."

Four rounds of feedback. Each time the plan got tighter. This is the part people miss about AI-assisted development — the AI doesn't get it right the first time, and it's not supposed to. The value is in the speed of iteration. Each revision took seconds, not hours.

// The Session: Implementation

With the plan locked, Claude started coding. Here's what happened, roughly in order:

- Shared utility first. A MatchesIPList() function in internal/util/network.go: takes a client IP and a list of IPs/CIDRs, returns whether it matches. Reusable by any module. 19 tests written and passing immediately.

- Internal analytics module updates. New ExcludeIPs field in the settings model. Migration v8 adding the exclude_ips column. The trackPageView() function now checks the client IP against the exclusion list before recording anything; excluded IPs are silently dropped.

- Self-referral filtering. If the referrer domain matches the site domain (after www. stripping and lowercasing), the referrer is cleared. The page view still counts, because it's a real visit, but it won't pollute the referrer reports.

- Purge handler. A POST endpoint that cleans existing self-referral data from both page_analytics_views and page_analytics_referrers. One-time cleanup with a confirmation dialog.

- Admin UI. Textarea for the IP exclusion list, purge button, site domain displayed read-only from config. All wired up with templ components.

- External analytics. No code changes. An info hint in settings: "Configure IP exclusion directly in your GA4/Matomo dashboard."

- Translations. English and Russian locale files updated for both modules.

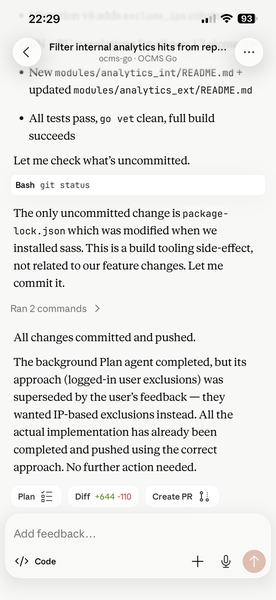

- Tests and docs. New tests for domain matching and URL extraction. Updated migration assertions. New README for internal analytics, updated docs for external.
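
For readers who want to see the shape of the shared utility from the first bullet, here is a rough sketch of an IP/CIDR matcher. Only the name MatchesIPList comes from the session. The signature, the body, the use of net/netip, and the parseExclusionList helper for the textarea input are all assumptions, so the real function in internal/util/network.go may look different.

```go
package main

import (
	"fmt"
	"net/netip"
	"strings"
)

// parseExclusionList splits textarea-style input into entries, accepting
// newlines or commas as separators. Hypothetical helper for this sketch.
func parseExclusionList(raw string) []string {
	return strings.FieldsFunc(raw, func(r rune) bool {
		return r == '\n' || r == '\r' || r == ','
	})
}

// MatchesIPList reports whether clientIP equals any single IP in entries or
// falls inside any CIDR range in entries. Malformed entries are skipped.
// The signature is an assumption; only the name appears in the article.
func MatchesIPList(clientIP string, entries []string) bool {
	addr, err := netip.ParseAddr(strings.TrimSpace(clientIP))
	if err != nil {
		return false
	}
	addr = addr.Unmap() // treat ::ffff:203.0.113.7 as 203.0.113.7

	for _, entry := range entries {
		entry = strings.TrimSpace(entry)
		if entry == "" {
			continue
		}
		if strings.Contains(entry, "/") {
			// CIDR range such as 198.51.100.0/24
			prefix, err := netip.ParsePrefix(entry)
			if err == nil && prefix.Contains(addr) {
				return true
			}
			continue
		}
		// Single address
		if ip, err := netip.ParseAddr(entry); err == nil && ip.Unmap() == addr {
			return true
		}
	}
	return false
}

func main() {
	// Documentation-only address ranges stand in for real pen-test IPs.
	excluded := parseExclusionList("203.0.113.7\n198.51.100.0/24")

	for _, ip := range []string{"203.0.113.7", "198.51.100.42", "192.0.2.1"} {
		if MatchesIPList(ip, excluded) {
			fmt.Println(ip, "is excluded: skip recording the page view")
		} else {
			fmt.Println(ip, "is tracked")
		}
	}
}
```

Skipping malformed entries instead of failing is a design choice in this sketch: a typo in the admin textarea should not stop tracking for everyone else.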

Build compiled. go vet clean. All tests passed. Committed and pushed.

// What Made This Work

Claude Code in the browser. No terminal, no IDE, no SSH. Just a phone screen on a riverbank. The web version has the full toolset — file reading, editing, searching, running commands, spawning agents. Same engine as the local CLI, accessible from anywhere.

Iterative planning before any code. Four rounds of plan revision before a single line was written. Each round took maybe 30 seconds of my input and a few seconds of Claude's processing. By the time implementation started, the scope was tight and the edge cases were identified.

Pushing back matters. The first plan was wrong. The second had unnecessary complexity. The third missed domain normalization. The fourth was right. If I'd accepted the first plan, I'd have a feature that filters by login status — completely useless for pen testing.

Domain knowledge can't be delegated. I knew that pen test traffic comes from known IPs, not authenticated sessions. I knew external analytics scripts must remain visible during testing. I knew that www.it-digest.info and it-digest.info are the same domain. Claude didn't know any of this — I told it, and it adapted.

// The Numbers

One session. Phone screen. Walking a dog.

- 17 files created or modified

- 1 new shared utility with full test coverage

- 1 database migration

- 2 modules updated (internal + external analytics)

- 2 languages (EN + RU translations)

- 4 plan revisions before implementation started

- 0 lines typed manually

From "I have an idea" to "pushed to main" — done before Luna and I made it back home.

// The Takeaway

People keep asking me whether AI-assisted development is real or just hype. Here's my answer: I shipped a security-related feature to a production codebase from my phone while walking a dog along the Rhône. The feature has tests, translations in two languages, documentation, and a proper migration. It handles edge cases I specified and drops complexity I rejected.

Is it perfect? No — I'll review the diff properly at my desk. But it's solid, it's tested, and it's deployed. The alternative was waiting until I got home, opening GoLand, and spending an evening on what is, frankly, plumbing work. IP matching, SQL migrations, templ components, locale JSON files — this is exactly the kind of work that AI does well and humans find tedious.

The creative part — identifying the problem, rejecting wrong approaches, specifying domain-specific constraints — that was all me. On a phone. While a miniature pinscher tried to eat a pigeon.

That's vibe coding. And it works.

Oleg Ivanchenko builds oCMS, an open-source CMS in Go, and writes about backend architecture and AI-assisted development at it-digest.info. Luna remains unimpressed by all of it.