What: a single-binary Go service that posts Claude Code release announcements and a daily AI news digest to @it_digest_info on Telegram.

How: zero lines of hand-typed production code. Claude Desktop drafted the spec in a single chat session. Claude Code then executed it, test-first, commit by commit. I reviewed diffs and pushed back on design choices; that's the whole author loop. Two Claude surfaces, one human reviewer.

Why write this up: the "vibe-coding" label is often used dismissively, as if the result were a throwaway toy. This project is the opposite. It's a static binary, scheduled by systemd, with a pure-Go SQLite store, a hardened systemd sandbox, a security audit whose findings are all closed, and ≥77% test coverage in every critical package. The development process was 100% AI-driven and produced a shippable v0.1.0 in a single weekend. The point of the article is not to celebrate speed but to show how that loop works, and what the author's job actually becomes when the typing is delegated.

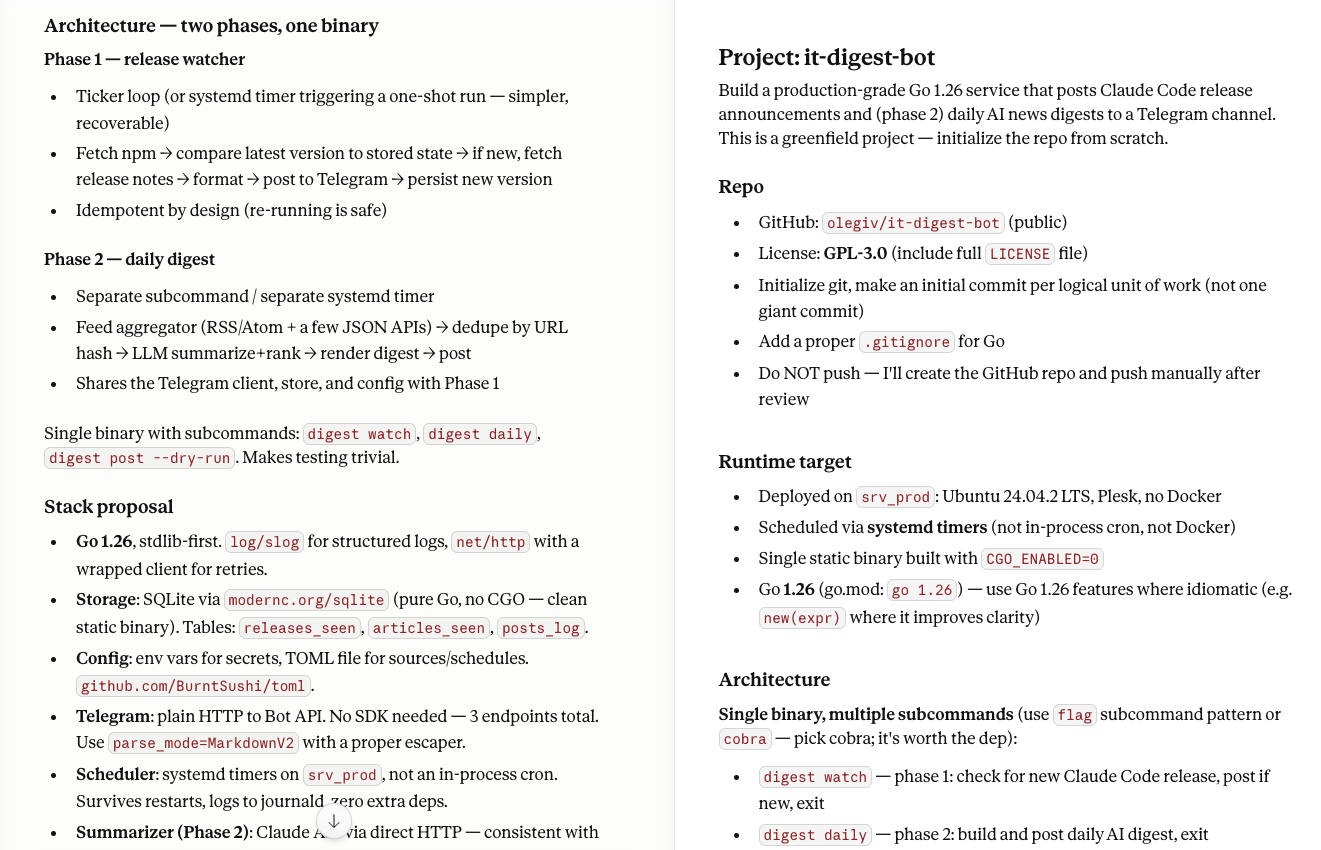

Step 0 — Write the spec before the first line of code

The first session of this project wasn't Claude Code at all. It was Claude Desktop — the chat app.

I opened a conversation there, described the thing I wanted, and let the model push back on architectural defaults. Out came a 350-line markdown document: repo layout, subcommand list, TOML config schema, SQLite DDL, systemd unit templates, a feed list for phase 2, a non-goals section, and a final instruction that closed with:

Start by asking me if I want to deviate on any of the above, then proceed autonomously.

That document is the input prompt to Claude Code. It is kept out of the repo (gitignored). In the next session I opened Claude Code in an empty working directory, pasted the spec, and let it run.

Why bother with a separate spec session? Because the quality of what Claude Code produces closely tracks the quality of the spec you hand it. If you open an empty directory and say "build me a Telegram bot," you get a Telegram bot. If you open an empty directory and hand Claude a document that says "single static binary, CGO_ENABLED=0, modernc.org/sqlite, systemd oneshot + timer, no Docker, no web server, no health endpoint, journald is enough," you get exactly that Telegram bot.

Claude Desktop is the right surface for the spec work because it's a chat — you iterate, push back, change your mind, let the model propose alternatives. Claude Code is the right surface for execution because it has file tools, a real shell, git, and a plan mode. They complement each other. When I finally pasted the spec into Claude Code, the first line of its output was not code; it was a list of the small deviations it wanted to negotiate before it started:

A few notes on choices I made without explicit input from you — push back if you disagree:

- cobra for CLI. You said GUI > CLI as a preference but this is a CLI…

- systemd timers over in-process cron. Survives restarts, logs to journald, one less thing to get right in Go…

- modernc.org/sqlite (pure Go) over mattn/go-sqlite3. No CGO = clean static binary = scp and go.

- GPL-3.0 LICENSE as full text, not SPDX reference. GPL legally requires the full text in the distribution.

- Instructs Claude Code NOT to push to GitHub. You'll want to create the repo on GitHub yourself…

Five small decisions, each justified, each negotiable. That's the tone you want from an autonomous coding agent. One back-and-forth, and then it was off.

The architecture in one breath

```
systemd timer (hourly) ───▶ digest watch ───▶ npm + GitHub + Telegram + SQLite
systemd timer (08:00 CH) ──▶ digest daily ───▶ 6 RSS feeds + Anthropic API + Telegram + SQLite
```

One Go binary (cmd/digest) with five subcommands. Each run is a one-shot: it starts, does its job, exits. There is no daemon, no HTTP server, no /health, no Docker. Idempotency comes from the SQLite store, not from in-process state. Re-running the same command twice is safe, because the second run sees the first run's rows in releases_seen or articles_seen and exits clean.

This matters for two reasons. First, it's operationally boring: systemctl status it-digest-watch.timer is the entire dashboard. Second, it's trivial to test: each subcommand is a plain func(ctx, config) error with no long-lived state, so the tests never have to stand up a server.

Phase 1 — the release watcher

The bot's first job: watch for new Claude Code releases on npm and post them to Telegram.

The flow, in the order it appears in internal/claudecode/watcher.go:

1. GET https://registry.npmjs.org/@anthropic-ai/claude-code, parse dist-tags.latest.
2. Look up (package, version) in releases_seen. If present, exit clean.
3. If new, fetch release notes from api.github.com/repos/anthropics/claude-code/releases/tags/v<version>. If GitHub returns 404 (common right after a publish, because the npm tag appears seconds before the GitHub release), fall back to raw.githubusercontent.com/anthropics/claude-code/main/CHANGELOG.md and parse out the section for this version.
4. Format to Telegram MarkdownV2.
5. Call sendMessage.
6. Record (package, version, posted_at, tg_message_id) and exit.
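Steps 1 and 3 are where the parsing happens. A minimal standard-library sketch of both, assuming a fetched response body is already in hand: latestVersion, releaseTagURL, and changelogSection are hypothetical names, not the code in internal/claudecode/watcher.go, and the "## <version>" heading that the fallback searches for is an assumption about the CHANGELOG's layout.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
	"strings"
)

// npmPackument models only the part of the npm registry document the watcher reads.
type npmPackument struct {
	DistTags map[string]string `json:"dist-tags"`
}

// latestVersion extracts dist-tags.latest from a registry response body (step 1).
func latestVersion(body []byte) (string, error) {
	var p npmPackument
	if err := json.Unmarshal(body, &p); err != nil {
		return "", fmt.Errorf("decode npm response: %w", err)
	}
	v := p.DistTags["latest"]
	if v == "" {
		return "", errors.New("npm response has no dist-tags.latest")
	}
	return v, nil
}

// releaseTagURL is the GitHub endpoint tried first for release notes (step 3).
func releaseTagURL(version string) string {
	return "https://api.github.com/repos/anthropics/claude-code/releases/tags/v" + version
}

// changelogSection is the 404 fallback: it returns the text under a "## <version>"
// heading, up to the next "## " heading. The heading format is an assumption.
func changelogSection(changelog, version string) (string, bool) {
	lines := strings.Split(changelog, "\n")
	start := -1
	for i, line := range lines {
		if strings.TrimSpace(line) == "## "+version {
			start = i + 1
			break
		}
	}
	if start < 0 {
		return "", false
	}
	end := len(lines)
	for i := start; i < len(lines); i++ {
		if strings.HasPrefix(lines[i], "## ") {
			end = i
			break
		}
	}
	return strings.TrimSpace(strings.Join(lines[start:end], "\n")), true
}

func main() {
	body := []byte(`{"name":"@anthropic-ai/claude-code","dist-tags":{"latest":"1.0.5"}}`)
	v, err := latestVersion(body)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(releaseTagURL(v))

	changelog := "# Changelog\n\n## 1.0.5\n\n- Fix A\n\n## 1.0.4\n\n- Older fix\n"
	if notes, ok := changelogSection(changelog, v); ok {
		fmt.Println(notes)
	}
}
```

Matching the whole trimmed heading line, rather than using a prefix match, keeps "## 1.0.5" from also matching a later "## 1.0.50".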

The non-obvious part is step 4. Telegram's MarkdownV2 is not the Markdown you know. Every ., !, -, +, =, (, ), [, ], {, }, >, |, #, ~, _, *, and ` must be backslash-escaped outside of code spans and link syntax. Inside a code span, only ` and \ need escaping. Inside a link URL, only ) and \. Inside link text, the regular rules apply again. Get one wrong and the Bot API rejects the whole message with a cryptic can't parse entities error.

So internal/telegram/markdownv2.go is a small state-machine escaper (291 lines including tests) with table-driven test cases covering backticks inside release notes, bullet points, issue links, and the kind of adversarial whitespace you get from auto-generated changelogs. The test file is the longest part of the telegram package — on purpose. This was the single most fiddly piece of the whole project, and I had Claude Code write the tests before the implementation, then iterate on failing cases.
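The three escaping contexts can be illustrated with a simpler approach than the project's state machine: escape each fragment according to where it will land while the message is being assembled. This only works when the renderer builds messages from known pieces instead of re-parsing arbitrary Markdown. It is a sketch, not the project's code; the function names and the sample changelog line and issue number are made up.

```go
package main

import (
	"fmt"
	"strings"
)

// textSpecials must be backslash-escaped in ordinary MarkdownV2 text and in link text.
const textSpecials = "_*[]()~`>#+-=|{}.!"

// escapeSet prefixes every rune of s that appears in set with a backslash.
func escapeSet(s, set string) string {
	var b strings.Builder
	for _, r := range s {
		if strings.ContainsRune(set, r) {
			b.WriteByte('\\')
		}
		b.WriteRune(r)
	}
	return b.String()
}

// EscapeText escapes ordinary text. The backslash itself is escaped too,
// so a literal "\" in a changelog is not read as the start of an escape.
func EscapeText(s string) string { return escapeSet(s, textSpecials+`\`) }

// EscapeCode escapes the inside of a code span: only ` and \.
func EscapeCode(s string) string { return escapeSet(s, "`\\") }

// EscapeLinkURL escapes the (...) part of an inline link: only ) and \.
func EscapeLinkURL(s string) string { return escapeSet(s, `)\`) }

// Code wraps s in an inline code span.
func Code(s string) string { return "`" + EscapeCode(s) + "`" }

// Link builds [text](url), escaping each part under its own rules.
func Link(text, url string) string {
	return "[" + EscapeText(text) + "](" + EscapeLinkURL(url) + ")"
}

func main() {
	// The leading "- " is escaped too: an unescaped "-" is a parse error in MarkdownV2.
	line := EscapeText("- Fixed crash when running ") + Code("claude --resume") +
		EscapeText(" (see ") +
		Link("#1234", "https://github.com/anthropics/claude-code/issues/1234") +
		EscapeText(")")
	fmt.Println(line)
}
```

A table-driven test over inputs like the ones above, written before any implementation, is a cheap way to pin these rules down.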

Phase 2 — the daily digest

Every morning at 08:00 Europe/Zurich, digest daily runs. It:

- Fetches six RSS/Atom feeds in parallel under errgroup (internal/news, using mmcdole/gofeed).
- Computes a canonical URL hash (lowercased scheme and host, normalized path, no fragment, sorted query-string keys with tracking params dropped) and dedupes against articles_seen.
- Sends the items in the 24–48h lookback window to POST https://api.anthropic.com/v1/messages with a system prompt that asks Claude to rank and summarize for a builder-oriented audience (source priority: Anthropic > OpenAI > others; Ollama and LM Studio get tier-3 weight; editorial aggregators get ranked below developer-community sources).
- Renders the result to MarkdownV2, grouped by source.
- Splits into chunks under Telegram's 4096-character message limit if needed, never breaking a paragraph, a code span, or a link.
- Posts each chunk sequentially, recording message_id per chunk.
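The chunking step can be sketched as a greedy packer over blank-line-separated paragraphs. This is a sketch under stated assumptions, not the project's splitter: it counts UTF-16 code units of the raw MarkdownV2 source. Because Telegram applies its 4096-character limit after entity parsing, counting escapes and markup errs on the safe side. It also assumes no code span or link crosses a paragraph boundary, and it returns an error for a single oversized paragraph instead of implementing a fallback.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
)

// maxMessageLen is Telegram's sendMessage text limit (4096 characters).
const maxMessageLen = 4096

// textLen counts UTF-16 code units, which is never less than the rune count,
// so it errs on the safe side of Telegram's character limit.
func textLen(s string) int {
	n := 0
	for _, r := range s {
		if r >= 0x10000 {
			n += 2 // astral-plane runes (most emoji) take a surrogate pair
		} else {
			n++
		}
	}
	return n
}

// errParagraphTooLong signals a paragraph that cannot fit in one message on its own.
var errParagraphTooLong = errors.New("paragraph exceeds message limit")

// splitMessage greedily packs whole paragraphs (separated by blank lines) into
// chunks of at most limit units. Paragraphs are never split, so a code span or
// link inside one paragraph always stays in one chunk.
func splitMessage(text string, limit int) ([]string, error) {
	var chunks []string
	var cur strings.Builder
	curLen := 0
	for _, p := range strings.Split(text, "\n\n") {
		pLen := textLen(p)
		if pLen > limit {
			return nil, fmt.Errorf("%w: %d > %d", errParagraphTooLong, pLen, limit)
		}
		sep := 0
		if curLen > 0 {
			sep = 2 // the "\n\n" joining this paragraph to the previous one
		}
		if curLen+sep+pLen > limit {
			chunks = append(chunks, cur.String())
			cur.Reset()
			curLen, sep = 0, 0
		}
		if sep > 0 {
			cur.WriteString("\n\n")
		}
		cur.WriteString(p)
		curLen += sep + pLen
	}
	if curLen > 0 {
		chunks = append(chunks, cur.String())
	}
	return chunks, nil
}

func main() {
	para := "A paragraph of digest text with an escaped period\\."
	text := strings.TrimSuffix(strings.Repeat(para+"\n\n", 200), "\n\n")
	chunks, err := splitMessage(text, maxMessageLen)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	for i, c := range chunks {
		fmt.Printf("chunk %d: %d units\n", i+1, textLen(c))
	}
}
```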

The lookback window defaults to 48 hours, not 24, because some feeds stamp items at 00:00 UTC and a strict 24h window silently drops them at the boundary: an item published after the morning run on day D but stamped D 00:00 UTC is already about 30 hours old when the next 08:00 Zurich run fires. The articles_seen.url_hash dedup makes the wider window safe: an item that's already been posted won't be posted again.
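The url_hash that makes the wider window safe depends on the canonicalization described in the digest flow. Here is a minimal sketch of that normalization. The tracking-parameter list (utm_*, fbclid, gclid) and the choice of SHA-256 are assumptions, since the article names neither.

```go
package main

import (
	"crypto/sha256"
	"encoding/hex"
	"fmt"
	"net/url"
	"path"
	"strings"
)

// trackingKeys is an illustrative list of click-tracking parameters; utm_* is handled by prefix.
var trackingKeys = map[string]bool{"fbclid": true, "gclid": true}

func isTracking(key string) bool {
	k := strings.ToLower(key)
	return strings.HasPrefix(k, "utm_") || trackingKeys[k]
}

// canonicalURL lowercases scheme and host, normalizes the path, drops the fragment,
// removes tracking parameters, and sorts the remaining query keys.
func canonicalURL(raw string) (string, error) {
	u, err := url.Parse(strings.TrimSpace(raw))
	if err != nil {
		return "", err
	}
	u.Scheme = strings.ToLower(u.Scheme)
	u.Host = strings.ToLower(u.Host)
	u.Fragment, u.RawFragment = "", ""
	// path.Clean collapses duplicate slashes and dot segments and drops a trailing slash.
	if u.Path == "" {
		u.Path = "/"
	} else {
		u.Path = path.Clean(u.Path)
	}
	u.RawPath = ""
	q := u.Query()
	for key := range q {
		if isTracking(key) {
			q.Del(key) // deleting during range is safe for Go maps
		}
	}
	u.RawQuery = q.Encode() // Encode sorts by key
	u.ForceQuery = false
	return u.String(), nil
}

// urlHash is the value this sketch would store as articles_seen.url_hash.
func urlHash(raw string) (string, error) {
	c, err := canonicalURL(raw)
	if err != nil {
		return "", err
	}
	sum := sha256.Sum256([]byte(c))
	return hex.EncodeToString(sum[:]), nil
}

func main() {
	for _, raw := range []string{
		"HTTPS://Example.COM/blog/post/?utm_source=rss&b=2&a=1#comments",
		"https://example.com/blog/post?a=1&b=2",
	} {
		h, err := urlHash(raw)
		if err != nil {
			fmt.Println("error:", err)
			continue
		}
		fmt.Println(h) // both inputs hash to the same value
	}
}
```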

The prompt tuning alone took six commits: 6c1074a Tune digest prompt for builder-oriented signal, ba0907a Add source priority, 75ae1a3 Rank developer-community sources above editorial aggregators, 56baedf Give Ollama, LM Studio, and runtime tooling tier-3 weight, and two more. Each commit was a one-line prompt change plus a snapshot of the rendered output in the PR description. This is the part of AI-assisted work that stays human judgement: the model can build the plumbing, but calibrating what "relevant to a builder" means is a taste call you have to make yourself.
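For readers who haven't called the Messages API directly, the request behind the ranking step has this shape. This is a minimal sketch: MODEL_ID is a placeholder, max_tokens is arbitrary, and the system prompt is a paraphrase of the source priorities listed above, not the project's tuned prompt. The endpoint, the x-api-key and anthropic-version headers, and the model/max_tokens/system/messages fields are the public API's.

```go
package main

import (
	"bytes"
	"context"
	"encoding/json"
	"fmt"
	"net/http"
	"os"
)

type apiMessage struct {
	Role    string `json:"role"`
	Content string `json:"content"`
}

// messagesRequest covers the Messages API fields this call needs.
type messagesRequest struct {
	Model     string       `json:"model"`
	MaxTokens int          `json:"max_tokens"`
	System    string       `json:"system,omitempty"`
	Messages  []apiMessage `json:"messages"`
}

// digestRequestBody puts the ranking rules in the system prompt and the
// candidate articles in a single user turn.
func digestRequestBody(model, systemPrompt, articles string, maxTokens int) ([]byte, error) {
	return json.Marshal(messagesRequest{
		Model:     model,
		MaxTokens: maxTokens,
		System:    systemPrompt,
		Messages:  []apiMessage{{Role: "user", Content: articles}},
	})
}

// newMessagesRequest wraps the body in an authenticated POST to the Messages API.
func newMessagesRequest(ctx context.Context, apiKey string, body []byte) (*http.Request, error) {
	req, err := http.NewRequestWithContext(ctx, http.MethodPost,
		"https://api.anthropic.com/v1/messages", bytes.NewReader(body))
	if err != nil {
		return nil, err
	}
	req.Header.Set("x-api-key", apiKey)
	req.Header.Set("anthropic-version", "2023-06-01")
	req.Header.Set("content-type", "application/json")
	return req, nil
}

func main() {
	system := "Rank and summarize for builders. Source priority: Anthropic > OpenAI > others."
	body, err := digestRequestBody("MODEL_ID", system, "1. <title> <url> <summary>", 2048)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	req, err := newMessagesRequest(context.Background(), os.Getenv("ANTHROPIC_API_KEY"), body)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	// Sending is left out on purpose: http.DefaultClient.Do(req) would make a real, billed call.
	fmt.Println(req.Method, req.URL)
}
```

Keeping the ranking rules in the system prompt means each tuning commit touches one string, which is what makes the one-line prompt diffs described above possible.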

Three design patterns worth lifting into your own projects

1. The dry-run contract

Both claudecode.Watcher and digest.Builder expose the same two fields:

```go
type Builder struct {
	// ...
	DryRun bool
	DryOut io.Writer // defaults to os.Stdout
}
```

When DryRun is true:

- The fully-rendered output is written to DryOut.

- The Telegram send is skipped.

- All DB writes are skipped.

That last one is the important part. A dry run that writes to releases_seen would only be dry the first time: the second invocation would see the row and short-circuit. By skipping DB writes, the same dry run can be repeated indefinitely and always renders what a live run would post at that moment. This is what lets make dry-watch and make dry-daily run safely on the production server, against live config, live feeds, and a live DB, with zero side effects. It's not a quality-of-life feature; it's a safety invariant.
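A minimal sketch of that contract, assuming each post has a dedup key and using two hypothetical interfaces, Sender and Store, as stand-ins for the Telegram client and the SQLite store. The real Watcher and Builder may be wired differently. The point is where the branch sits: after the reads, before any send or write.

```go
package main

import (
	"context"
	"fmt"
	"io"
	"os"
)

// Sender and Store are hypothetical seams for the Telegram client and the SQLite store.
type Sender interface {
	Send(ctx context.Context, text string) (messageID int64, err error)
}

type Store interface {
	Seen(ctx context.Context, key string) (bool, error)
	MarkPosted(ctx context.Context, key string, messageID int64) error
}

type Builder struct {
	Sender Sender
	Store  Store
	DryRun bool
	DryOut io.Writer // defaults to os.Stdout
}

// Publish reads freely in both modes, but only a live run sends or writes.
func (b *Builder) Publish(ctx context.Context, key, rendered string) error {
	seen, err := b.Store.Seen(ctx, key)
	if err != nil {
		return err
	}
	if seen {
		return nil
	}
	if b.DryRun {
		out := b.DryOut
		if out == nil {
			out = os.Stdout
		}
		_, err := fmt.Fprintln(out, rendered)
		return err // no Send, no MarkPosted: the next dry run sees the same state
	}
	id, err := b.Sender.Send(ctx, rendered)
	if err != nil {
		return err
	}
	return b.Store.MarkPosted(ctx, key, id)
}

// memStore and fakeSender are in-memory stand-ins for the demo.
type memStore struct{ posted map[string]int64 }

func (m *memStore) Seen(_ context.Context, key string) (bool, error) {
	_, ok := m.posted[key]
	return ok, nil
}

func (m *memStore) MarkPosted(_ context.Context, key string, id int64) error {
	m.posted[key] = id
	return nil
}

type fakeSender struct{ sent int }

func (f *fakeSender) Send(_ context.Context, _ string) (int64, error) {
	f.sent++
	return int64(f.sent), nil
}

func main() {
	ctx := context.Background()
	st := &memStore{posted: map[string]int64{}}
	snd := &fakeSender{}
	dry := &Builder{Sender: snd, Store: st, DryRun: true}
	_ = dry.Publish(ctx, "claude-code@1.0.5", "release notes")
	_ = dry.Publish(ctx, "claude-code@1.0.5", "release notes") // prints again: still dry
	fmt.Println("sends:", snd.sent, "rows:", len(st.posted))
}
```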

2. The URL sanitizer pattern

The Telegram Bot API puts the bot token in the URL path: https://api.telegram.org/bot<TOKEN>/sendMessage. If httpx.Client runs out of retries and returns an error, the default %w-wrapped message contains the full URL, and therefore the token. url.URL.Redacted() only masks the password in the userinfo part, not the path. So we install a per-client sanitizer:

```go
// In telegram.New:
b.http.SetURLSanitizer(SanitizeURL) // masks /bot<TOKEN>/

// In news.NewFeedFetcher:
h.SetURLSanitizer(SanitizeURL) // strips userinfo, query, fragment
```

Both call sites install the sanitizer unconditionally on whatever *httpx.Client the caller passed in. This is a mutation. It's documented. And it forces a discipline on the callers: because installing one sanitizer overwrites the other, cmd/digest/watch.go and cmd/digest/daily.go construct separate httpx.Client instances per API — one for the Telegram client, one for the feed fetcher, one for everything else. If you share, one constructor's sanitizer stomps another's, and the next retry-exhausted error leaks credentials.
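What the two sanitizers might do, sketched with the standard library. These are hypothetical functions, not the project's SanitizeURL implementations, and the sketch does not reproduce httpx's SetURLSanitizer. redactURLError shows one place to apply them: net/http reports transport failures as *url.Error, whose message embeds the request URL.

```go
package main

import (
	"errors"
	"fmt"
	"net/url"
	"regexp"
)

// botTokenPath matches the /bot<TOKEN>/ path segment of Bot API URLs.
var botTokenPath = regexp.MustCompile(`/bot[^/]+/`)

// sanitizeTelegramURL masks the token; url.URL.Redacted would leave it in place.
func sanitizeTelegramURL(raw string) string {
	return botTokenPath.ReplaceAllString(raw, "/bot<redacted>/")
}

// sanitizeFeedURL drops userinfo, query, and fragment, where feed credentials tend to live.
func sanitizeFeedURL(raw string) string {
	u, err := url.Parse(raw)
	if err != nil {
		return "<unparseable URL>"
	}
	u.User = nil
	u.RawQuery, u.ForceQuery = "", false
	u.Fragment, u.RawFragment = "", ""
	return u.String()
}

// redactURLError rewrites the URL inside a *url.Error. Apply it where the error is
// first received: once a %w wrap has formatted the message, the raw URL is baked
// into that outer string. If err wraps a *url.Error, only the sanitized inner
// error is returned, because the wrapper's text may already contain the raw URL.
func redactURLError(err error, sanitize func(string) string) error {
	var ue *url.Error
	if errors.As(err, &ue) {
		return &url.Error{Op: ue.Op, URL: sanitize(ue.URL), Err: ue.Err}
	}
	return err
}

func main() {
	err := &url.Error{
		Op:  "Post",
		URL: "https://api.telegram.org/bot123456:SECRET/sendMessage",
		Err: errors.New("connection refused"),
	}
	fmt.Println(redactURLError(err, sanitizeTelegramURL))
}
```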

I would not have written this pattern on my own — I'd have reached for Redacted(), shipped, and leaked tokens in the first journald line of the first failed retry. Claude caught it during review on commit e646769 Redact Telegram bot token from retry-exhausted errors, and then generalized the pattern across feeds three commits later in ffee424 Harden sanitizer wiring.

3. SQLite serialization is not optional

internal/store/store.go opens the DB with journal_mode=WAL, busy_timeout=5000, and then:

```go
db.SetMaxOpenConns(1)
```

One connection. SQLite handles concurrent reads fine in WAL mode, but it serializes writes. busy_timeout makes a blocked writer wait up to five seconds, yet two connections contending for the write lock can still surface SQLITE_BUSY errors that propagate up as flaky failures. With SetMaxOpenConns(1), Go's database/sql pool queues callers on the single connection, so the process never contends with itself for the lock. The CLAUDE.md committed to the repo calls this out explicitly: "do not raise this." Future-me has been warned.

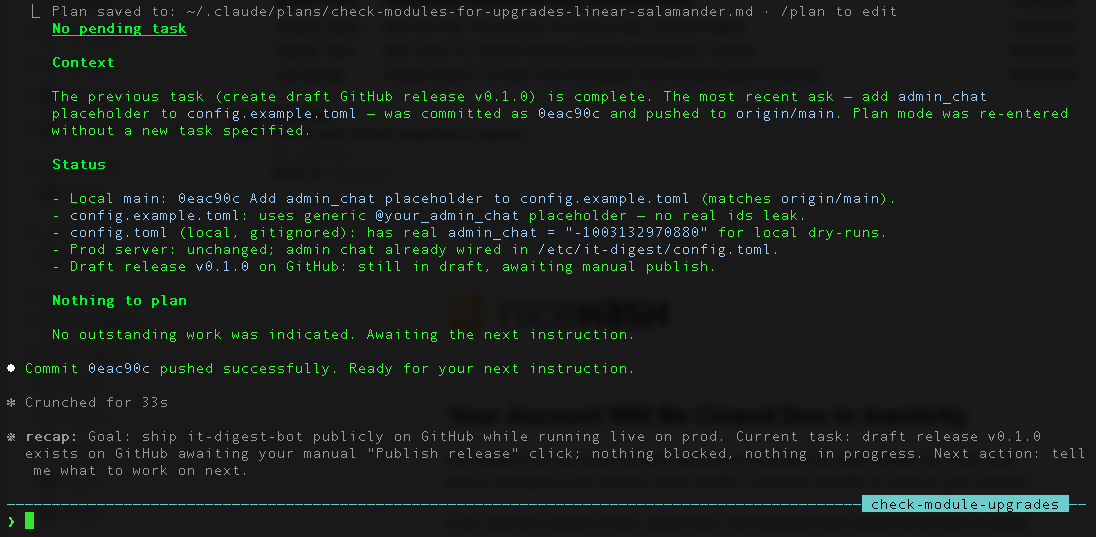

The AI-assisted workflow, honestly

The commit graph is 54 commits across roughly 48 hours of wall-clock time. That's not 48 hours of coding; it's maybe 6 hours of my time, split across two sessions, directing Claude Code. Here's what the author loop actually looks like:

- Pick a phase-sized bite. "Implement phase 1." Not "build the whole thing." Claude Code is good at bounded tasks; it's mediocre at open-ended ones.

- Let it draft. Watch it propose the plan (plan mode), push back on pieces you disagree with, then let it go.

- Read every diff. This is non-negotiable. The agent will produce code that compiles, passes tests, and subtly drifts from your intent. You catch that by reading, not by trusting.

- Write one-line commits. The git log is the progress bar: 31299ce Implement Claude Code release watcher (phase 1), 4b792a4 Implement phase 2: daily AI news digest, eb8d072 Flesh out README with architecture and install docs. Each commit is one concern. If Claude wants to bundle two into one, you split it.

- Iterate in small diffs. 47f8b16 Widen digest lookback to 48h and make it configurable: one commit, one idea, one revert button. The speed gain from AI doesn't come from writing a 2,000-line mega-commit; it comes from shrinking the ship-review-revise loop.

- Keep a CLAUDE.md. The repo has a committed CLAUDE.md (mirrored to AGENTS.md for other agents) that documents architecture, package boundaries, and hard constraints. Every new session starts with Claude Code re-reading it. Without this file, the agent re-derives the conventions every session and occasionally drifts; with it, the conventions are load-bearing.

What didn't work as well, and one thing that worked better than expected:

- Prompt tuning is still your job. The digest prompt went through six commits because "rank for a builder audience" is a taste call, not a fact. No agent can calibrate that for you — you have to see the output and push back.

- Security review needs a dedicated agent. I wouldn't have caught the URL-token leak on my own. I also wouldn't have caught it if I'd asked the same model that wrote the code to review the code. I used a separate security-auditor agent (.claude/agents/security-auditor.md, committed) with a different prompt orientation, and it surfaced 1 HIGH, 4 MEDIUM, and 4 LOW findings, all of which are now closed in source before v0.1.0. See efc4543 Close LOW findings from 2026-04-18 security audit and the MEDIUM commit immediately before it.

- Ops work is where agents shine. The deploy/ directory, with deploy.sh, rollback.sh, backup.sh, status.sh, dry-run.sh, uninstall.sh, and six systemd units carrying a full sandbox (NoNewPrivileges, ProtectSystem=strict, SystemCallFilter=@system-service, RestrictNamespaces, the whole litany), was cranked out in one session. This is the kind of boilerplate that you normally procrastinate on for weeks because writing it yourself is tedious. An agent has no procrastination.

The security hardening arc

Three things I'd highlight from the audit trail, because they're easy to miss in your own projects:

- Every SQL statement is parameterized. No string concatenation into query text anywhere in internal/store/. Boring, audit-closing. Do this.

- Feed HTML goes through golang.org/x/net/html, not a regex stripper. <script> and <style> subtrees are dropped, so adversarial markup can't hide instructions for the LLM prompt inside them. Prompt injection via RSS is not theoretical.

- Systemd sandbox. The production units run as an unprivileged it-digest user with a read-only filesystem except /var/lib/it-digest/ and /var/backups/it-digest/, no network access for the backup unit, and a syscall allow-list. If the binary gets RCE'd, the attacker lands in a sandbox with a mostly read-only filesystem, a filtered syscall set, and, in the backup unit, no network at all.

These are not things an AI volunteers unprompted on the first pass. You surface them by running an audit as a separate step, with a prompt that orients the model toward adversarial thinking. That prompt lives in .claude/agents/security-auditor.md and gets updated as the surface area grows (see 3b43701 Update security-auditor agent with new audit surfaces).

Ops: the boring-is-good part

The bot runs on one Ubuntu 24.04 box behind Plesk. There is nothing interesting about the deploy. That's the point.

- Push: make deploy runs the race tests, builds a static PIE binary targeting x86-64-v3, preserves the current binary as digest.prev, scps the new one, chmods, and exits. The next timer fire uses the new binary. No downtime, because there's no daemon to restart.

- Rollback: make rollback swaps digest ↔ digest.prev. The failed binary is kept as digest.failed for inspection.

- Backup: a third systemd timer runs at 03:00 Europe/Zurich, does sqlite3 -readonly .backup → gzip → /var/backups/it-digest/state-<UTC>.db.gz, and prunes files older than 14 days. SQLite's online .backup yields a consistent snapshot even if the hourly watcher writes mid-copy, and the 03:00 slot keeps it well clear of the 08:00 daily digest.

- Failure alerts: OnFailure=it-digest-notify@%n.service is wired on the services behind all three timers. If a run fails, a hidden digest notify subcommand posts to the admin Telegram chat with host, timestamp, unit name, and the exit code. This took three commits to get right (1ed9306, 9719b61, 4f8f447 Harden notify service with the syscall denylist).
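A sketch of what the notify subcommand's message could look like. It is hypothetical throughout. It assumes the failed unit's name reaches the program as its first argument (with an OnFailure=...@%n.service template, the failed unit name is the template instance, available as %i). It also assumes the exit status comes from MONITOR_EXIT_STATUS, which systemd 251 and later exports to units started via OnFailure=. Every field is MarkdownV2-escaped, since unit names and hostnames routinely contain "-" and ".".

```go
package main

import (
	"fmt"
	"os"
	"strings"
	"time"
)

// escapeMDV2 escapes ordinary MarkdownV2 text (including the backslash itself).
func escapeMDV2(s string) string {
	var b strings.Builder
	for _, r := range s {
		if strings.ContainsRune("_*[]()~`>#+-=|{}.!\\", r) {
			b.WriteByte('\\')
		}
		b.WriteRune(r)
	}
	return b.String()
}

// failureAlert formats the admin-chat message: failed unit, host, timestamp, exit status.
func failureAlert(unit, host, timestamp, exitStatus string) string {
	return fmt.Sprintf("*%s failed*\nhost: %s\ntime: %s\nexit status: %s",
		escapeMDV2(unit), escapeMDV2(host), escapeMDV2(timestamp), escapeMDV2(exitStatus))
}

func main() {
	// Assumption: the unit file passes the template instance (the failed unit) as argv[1].
	unit := "unknown.service"
	if len(os.Args) > 1 {
		unit = os.Args[1]
	}
	host, err := os.Hostname()
	if err != nil {
		host = "unknown"
	}
	// Assumption: systemd 251+ sets MONITOR_EXIT_STATUS for units started via OnFailure=.
	status := os.Getenv("MONITOR_EXIT_STATUS")
	if status == "" {
		status = "unknown"
	}
	fmt.Println(failureAlert(unit, host, time.Now().UTC().Format(time.RFC3339), status))
}
```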

Takeaways

For anyone starting a similar project:

- Write the spec in a separate session before the code session. Claude Desktop for the chat-shaped spec work, Claude Code for the execution. The context switch between surfaces is the whole point — it forces you to commit the spec to writing before any file gets created.

- Commit a CLAUDE.md/AGENTS.md. Architecture, package boundaries, hard constraints, naming conventions. If you wouldn't want a new junior engineer to re-derive it on their first day, write it down.

- Use multiple agents with different prompts. The coder, the security auditor, and the reviewer are three different jobs. Don't ask the same agent to do all three.

- Read every diff. The speed-up is real. The attention you save on typing goes straight into review. Budget accordingly.

- Keep the binary boring. Static, one-shot, systemd-scheduled. The fewer moving parts, the less surface area for AI-generated code to misbehave.

The repo is public at github.com/olegiv/it-digest-bot. The Telegram channel it publishes to is @it_digest_info. The v0.1.0 release notes are in CHANGELOG.md. Everything is GPL-3.0. Patches welcome.